Researchers at UT Austin have created an AI-powered semantic decoder that translates brain activity into text using non-invasive fMRI scans. The system maps neural blood flow to linguistic concepts, allowing it to reconstruct the essence of a person’s thoughts or the stories they are hearing.

TLDR: Scientists at the University of Texas at Austin have developed a non-invasive AI system that decodes human brain activity into text. By analyzing fMRI data with large language models, the decoder can reconstruct the gist of a person’s thoughts, offering a potential communication lifeline for individuals with speech-impairing neurological conditions.

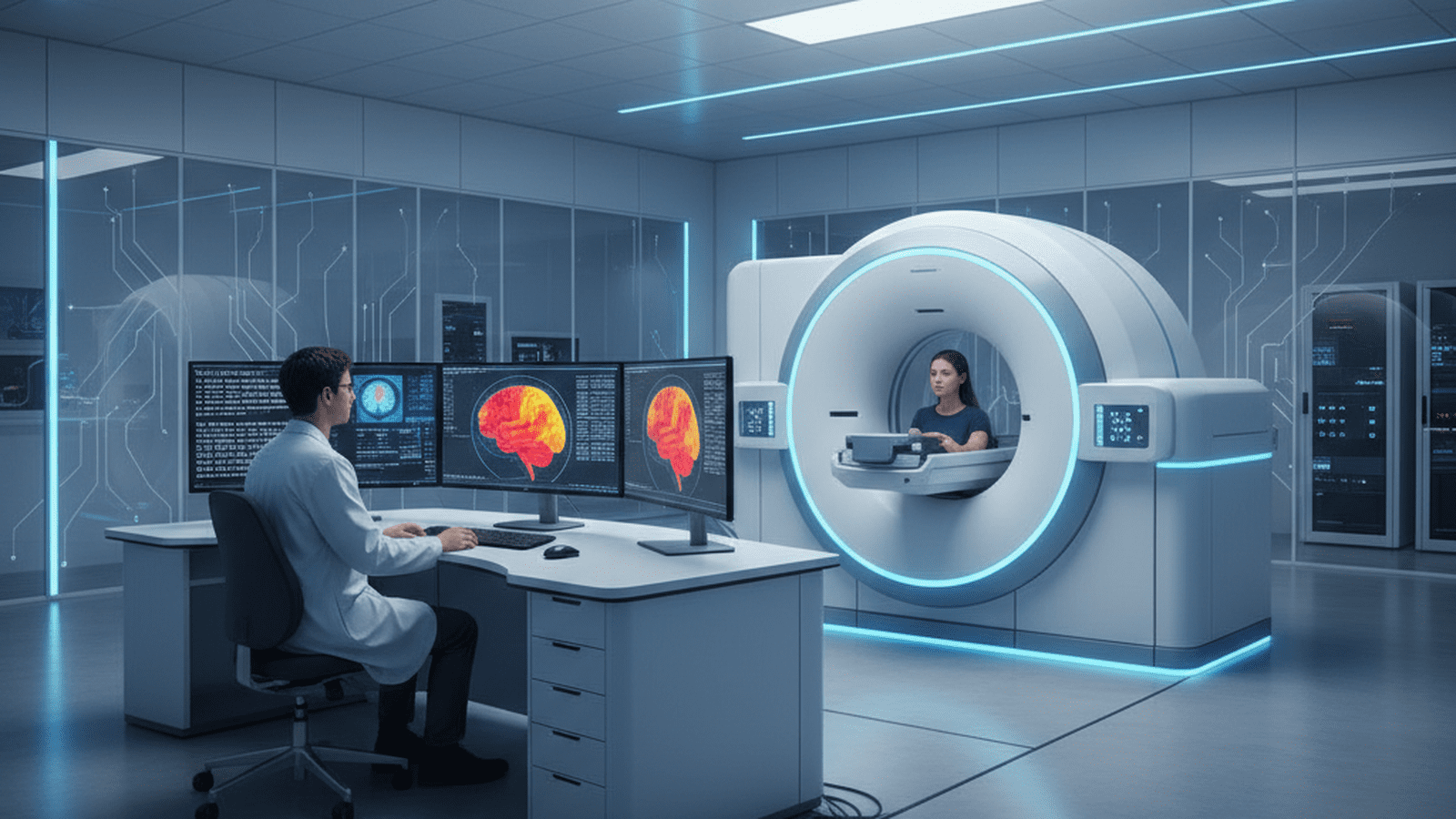

Researchers at the University of Texas at Austin have achieved a landmark result at the intersection of neuroscience and artificial intelligence. They have developed a “semantic decoder” capable of translating a person’s brain activity into a continuous stream of text. Unlike earlier language-decoding brain-computer interfaces (BCIs), which rely on surgically implanted electrodes, this system is entirely non-invasive. Published in the journal Nature Neuroscience, the study pairs functional magnetic resonance imaging (fMRI) with a large language model similar to those powering modern AI assistants. The work marks a shift away from decoding individual letters or motor commands toward interpreting the high-level meaning of human thought.

The system’s foundation lies in fMRI technology, which tracks changes in blood flow and oxygenation in the brain. While fMRI offers excellent spatial resolution, its temporal resolution is notoriously poor: blood-flow changes take several seconds to peak, whereas people speak several words per second, so each scan reflects a blur of many words. To work around this lag, the UT Austin team, led by Jerry Tang and Alex Huth, used a generate-and-score approach: a language model proposes candidate word sequences, a model of each participant’s brain predicts the activity each candidate should evoke, and the decoder keeps the candidates whose predictions best match the recorded activity. The decoder was trained on data from three participants, each of whom listened to 16 hours of narrative podcasts while lying in the scanner. This allowed the system to learn how specific semantic concepts, ranging from family relationships to physical actions, show up as distinctive blood-flow patterns in each individual’s brain.
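
The decoding idea can be illustrated with a toy generate-and-score loop: a language model proposes next words, an encoding model predicts the brain response each candidate would evoke, and a beam search keeps the candidates whose predictions best match the recorded signal. This is a minimal sketch under stated assumptions, not the team’s code. The vocabulary, feature vectors, four “voxel” weights, the fixed next-word table standing in for a real language model, and the 0.6/0.4 mixing that mimics blood-flow lag are all hypothetical values chosen for illustration.

```python
"""Toy generate-and-score decoder (all data and names are hypothetical)."""

# Hypothetical 3-dimensional "semantic features" for a tiny vocabulary.
FEATURES = {
    "she": (1.0, 0.0, 0.0),
    "he": (0.9, 0.1, 0.0),
    "drives": (0.0, 1.0, 0.0),
    "walks": (0.0, 0.8, 0.3),
    "home": (0.0, 0.0, 1.0),
    "away": (0.2, 0.0, 0.7),
}
START = (0.0, 0.0, 0.0)  # features "before" the first word

# Hypothetical per-person encoding model: 4 "voxels" x 3 features.
# A real encoding model would be fit to each listener's own scan data.
WEIGHTS = [
    (1.0, 0.0, 0.0),
    (0.0, 1.0, 0.0),
    (0.0, 0.0, 1.0),
    (0.5, 0.5, 0.0),
]

# Hypothetical stand-in for a language model: which words may come next.
NEXT_WORDS = {
    None: ["she", "he"],
    "she": ["drives", "walks"],
    "he": ["drives", "walks"],
    "drives": ["home", "away"],
    "walks": ["home", "away"],
}


def blurred_features(words, t):
    """Mix word t with word t-1 to mimic the slow, smeared blood-flow signal."""
    current = FEATURES[words[t]]
    previous = FEATURES[words[t - 1]] if t > 0 else START
    return tuple(0.6 * c + 0.4 * p for c, p in zip(current, previous))


def predict_response(words, t):
    """Encoding model: predicted voxel activity at step t for a word sequence."""
    x = blurred_features(words, t)
    return [sum(w * xi for w, xi in zip(row, x)) for row in WEIGHTS]


def decode(observed, beam_width=2):
    """Beam search: extend candidates with proposals, keep best-matching ones."""
    beam = [([], 0.0)]  # (word sequence, cumulative squared error)
    for t, target in enumerate(observed):
        expanded = []
        for words, err in beam:
            last = words[-1] if words else None
            for nxt in NEXT_WORDS.get(last, []):
                cand = words + [nxt]
                pred = predict_response(cand, t)
                step_err = sum((p - o) ** 2 for p, o in zip(pred, target))
                expanded.append((cand, err + step_err))
        expanded.sort(key=lambda item: item[1])  # lowest error first
        beam = expanded[:beam_width]
    return beam[0][0]


# Simulate "recorded" activity for a heard sentence, then decode it.
heard = ["she", "drives", "home"]
recorded = [predict_response(heard, t) for t in range(len(heard))]
print(decode(recorded))  # prints ['she', 'drives', 'home']
```

Because this toy signal is noise-free and generated by the same encoding model used for scoring, the true sentence scores a perfect match. With real scans, noise and the blur across many words mean the best-scoring candidate is often a paraphrase rather than the exact wording.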

Once calibrated, the decoder could generate text that captured the essence of what a participant was hearing or even thinking. The researchers noted that the system does not produce a word-for-word transcript; instead, it captures the “gist” of the narrative. For instance, when a participant heard the phrase “I don’t have my driver’s license yet,” the decoder rendered the brain activity as “She has not even started to learn to drive yet.” This suggests the decoder is tracking the meaning of what was heard rather than its exact wording. The decoder also produced meaningful output when participants watched short silent videos or silently narrated stories of their own, suggesting that the brain draws on similar semantic representations for both external perception and internal monologue.
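
A strict word-for-word yardstick undersells this kind of output. The minimal sketch below computes word error rate (WER), the word-level edit distance divided by the number of words in the reference, for the driver’s-license example. Lowercasing and splitting on spaces is a simplifying tokenization assumption, and the function names are illustrative.

```python
def word_edit_distance(reference, hypothesis):
    """Levenshtein distance over words (substitutions, insertions, deletions)."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    # prev[j]: edits needed to turn the reference words seen so far into hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            curr[j] = min(
                prev[j] + 1,             # delete a reference word
                curr[j - 1] + 1,         # insert a hypothesis word
                prev[j - 1] + (r != h),  # substitute (free if the words match)
            )
        prev = curr
    return prev[-1]


def word_error_rate(reference, hypothesis):
    """Edit distance normalized by reference length."""
    return word_edit_distance(reference, hypothesis) / len(reference.split())


heard = "I don't have my driver's license yet"
decoded = "She has not even started to learn to drive yet"
print(word_edit_distance(heard, decoded))         # prints 9
print(round(word_error_rate(heard, decoded), 3))  # prints 1.286
```

The only shared word is “yet,” so the WER exceeds 1: turning the heard sentence into the decoded one takes more edits than the heard sentence has words, even though a human reader would judge the two as saying much the same thing. Meaning-based similarity measures are better suited to judging this kind of paraphrase.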

Given the potential for misuse, the research team placed a heavy emphasis on mental privacy. They tested whether the decoder could be used without a subject’s consent and found that it requires the active cooperation of the user. If a participant resisted by performing distracting mental tasks, such as counting by sevens or imagining a list of animals, the decoder failed to produce coherent text. Furthermore, a model trained on one person’s brain data could not be used to decode another person’s thoughts, because the mapping of semantic concepts to neural activity is highly personalized. This “biological encryption” helps keep the technology, at least in its current form, a tool for the user rather than a means of surveillance.

The primary goal for this technology is to provide a communication lifeline for people who are cognitively intact but physically unable to speak, such as those with locked-in syndrome or advanced amyotrophic lateral sclerosis (ALS). For now, the reliance on bulky and expensive fMRI machines confines the system to laboratory and clinical settings. However, the researchers are optimistic about transferring the approach to more portable technologies such as functional near-infrared spectroscopy (fNIRS), which uses infrared light to measure blood flow and can be built into a wearable headset. As the AI models become more efficient and the hardware more accessible, the semantic decoder could eventually give a lasting, non-invasive voice to people who have lost theirs, reshaping the landscape of rehabilitative medicine.